Physicist: For about the reason you’d expect: they’re just too damn small and too damn far away. Nothing fancy. That’s not to say that we can never get images, just that you need to be a lot closer. The lunar landers are each about 4 meters across and about 384,400,000 meters away, which makes them about as hard to see as a single coin from a thousand miles away. You gotta squint.

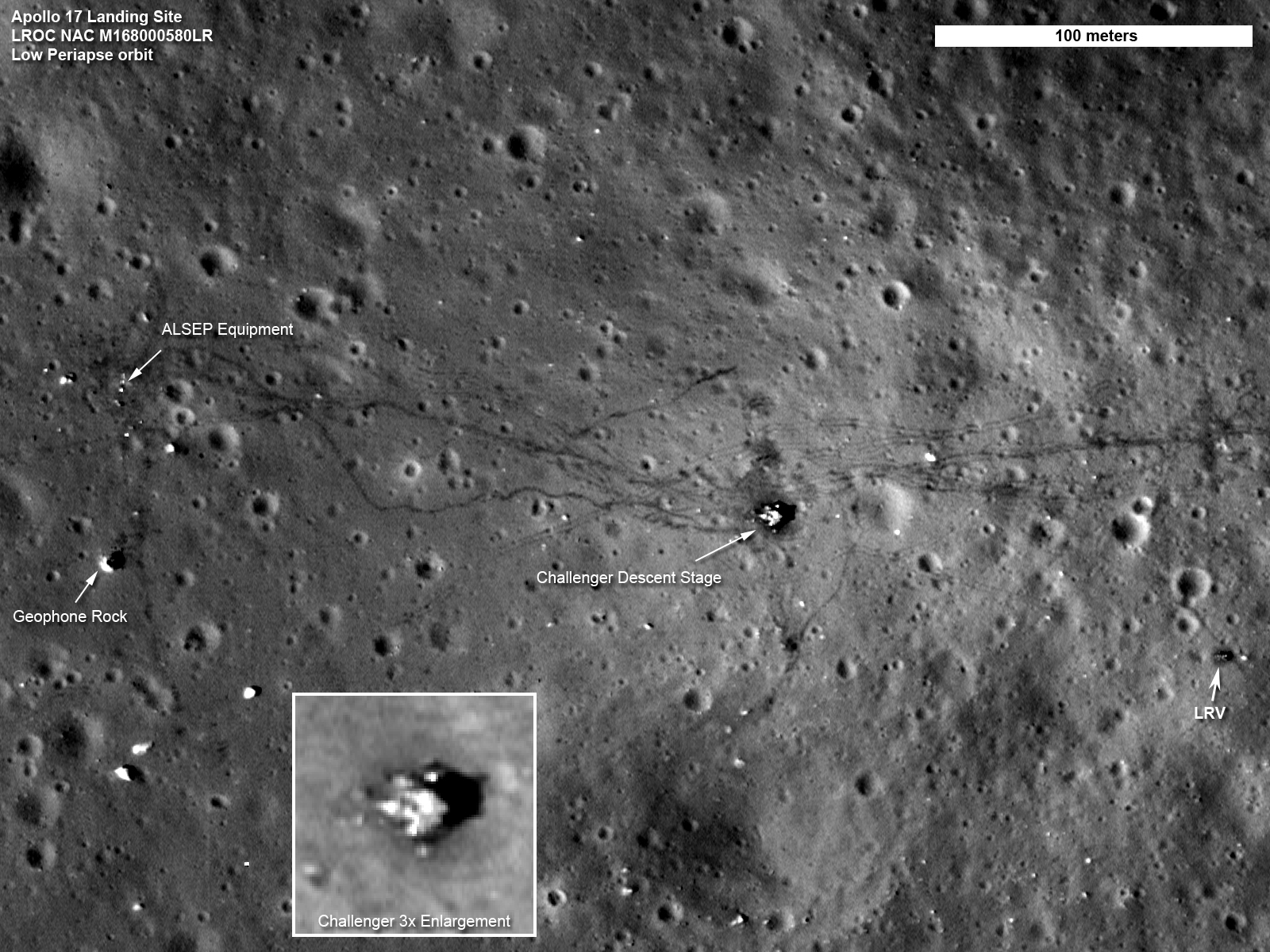

A picture of the Apollo 17 landing site taken by the Lunar Reconnaissance Orbiter which, as the name implies, was in orbit around the Moon when it took this presumably reconnaissance-related picture. Those meandering lines are tracks left by a lunar rover.

In fact, a big part of why we (humans) bother to go to the Moon, other planets, and space in general is that photographs from Earth leave a lot to be desired. In addition to being far from everything else, here on the surface of Earth we’re stuck at the bottom of an ever-moving sea of air. In exactly the same way that the surface of water scatters light, air makes it difficult for astronomers to practice their dread craft.

Also, not for nothing, telescopes are terrible at retrieving material samples.

You and every telescope on Earth (and the Hubble Telescope in low Earth orbit) are all about a quarter million miles from the Moon and the landing sites thereon. If we ever get around to building something bigger on the Moon, like mines or cities or presidents’ heads, then we shouldn’t have nearly as much trouble seeing it from Earth.

Answer Gravy: It turns out that the best/biggest telescopes we use today on Earth can’t detect things the size and distance of the lunar landers using visible light. This isn’t due to poor design; the devices we’re using now are, in a word, perfect. They literally cannot be made appreciably better (at detecting visible light). The roadblock is more fundamental.

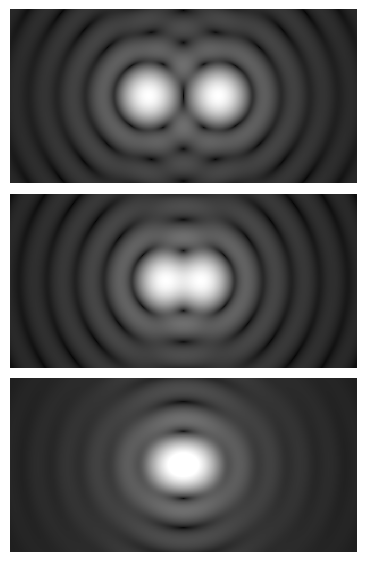

The “resolving power” of a telescope is described in terms of whether or not you can tell the difference between a pair of adjacent points. If the two points are too close together, then you’ll see them blurred together as one point and they are “not resolved”. If they’re far enough apart, then you see both points independently.

Whether it uses mirrors or lenses, the resolving power of every telescope is limited by some fundamental constraints determined by the wavelength of the light that’s being observed and by the size of the aperture.

Every point in every image is surrounded by the rapidly diminishing rings of an “Airy disk”, which are a symptom of light being wave-like. This is only a problem really close to the diffraction limit. You don’t see these when you take a picture on a regular camera because these rings are smaller than the individual pixels in the camera’s CCD (by design).

Because light is a wave it experiences “diffraction” which makes it “ooze around corners” and generally end up going in the wrong directions. But the larger a telescope’s opening, the more the light waves have a chance to interfere in such a way that they propagate in straight lines, which makes for cleaner images where the light ends up more-or-less where it’s supposed to be when it gets to the film or CCD or your retina or whatever.

It turns out that the relationship between the smallest resolvable angle, θ, the wavelength, λ, and the diameter, D, of the aperture is remarkably simple:

$$\theta \approx 1.22\,\frac{\lambda}{D}$$

Visible light has a wavelength of around 0.5 micrometers (about 2,000,000 per meter) and the largest visible-spectrum telescopes on Earth are about 10 meters across (Hubble is a more humble 2.4m across). That means that the absolute best resolution that any of our telescopes can hope to achieve, under absolutely ideal circumstances, is about $\theta \approx 1.22 \times \frac{0.5 \times 10^{-6}}{10} \approx 6 \times 10^{-8}$ radians. Or, for the angle buffs out there, about 0.01 arcseconds. This doesn’t take into account the scattering due to the atmosphere; we can do a little to combat that from the ground, but our techniques aren’t perfect.
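For anyone who wants to check the arithmetic, here is a minimal Python sketch of the diffraction limit, assuming the Rayleigh criterion (θ ≈ 1.22 λ/D) and the round numbers quoted above. The function name `diffraction_limit_rad` is just an illustrative label, not part of any library.

```python
import math

# Conversion factor: one radian is about 206,265 arcseconds.
ARCSEC_PER_RADIAN = 180 / math.pi * 3600


def diffraction_limit_rad(wavelength_m, aperture_m):
    """Smallest resolvable angle in radians, by the Rayleigh criterion."""
    return 1.22 * wavelength_m / aperture_m


wavelength = 0.5e-6  # visible light, in meters (round figure from the post)

for name, diameter in [("10 m ground telescope", 10.0), ("Hubble (2.4 m)", 2.4)]:
    theta = diffraction_limit_rad(wavelength, diameter)
    print(f"{name}: {theta:.1e} rad = {theta * ARCSEC_PER_RADIAN:.3f} arcsec")
```

Under these assumptions a 10 meter mirror bottoms out at roughly 0.01 arcseconds and Hubble at roughly 0.05 arcseconds, before the atmosphere gets involved at all.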

By carefully looking at how the atmosphere distorts a laser beam shot upwards from a telescope on the ground, we can take into account how the atmosphere affects light coming into the telescope from space.

The lunar landers are a little over 4 meters across (seen from above) and are about 384,403,000 meters away. That means that the landers subtend an angle of about 0.002 arcseconds, or about 10⁻⁸ radians. In order to see this from Earth, we’d need a telescope that is, at absolute minimum, about 60 meters across (that’s D ≈ 1.22 λ/θ). If we wanted the image to be more than a single pixel, then we’d need a mirror that’s a few miles across.
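The same estimate can be run as a short Python sketch. It assumes the small-angle approximation, the Rayleigh criterion, a 4 meter lander, and 0.5 micrometer light; the helper names and the `pixels_across` parameter are made up for illustration.

```python
import math

ARCSEC_PER_RADIAN = 180 / math.pi * 3600


def angular_size_rad(size_m, distance_m):
    """Angle subtended by an object (small-angle approximation)."""
    return size_m / distance_m


def min_aperture_m(wavelength_m, angle_rad, pixels_across=1):
    """Aperture needed to resolve `angle_rad` into `pixels_across` elements,
    using the Rayleigh criterion D = 1.22 * wavelength / angle."""
    return 1.22 * wavelength_m / (angle_rad / pixels_across)


lander_angle = angular_size_rad(4.0, 384_403_000.0)
print(f"lander: {lander_angle:.2e} rad = "
      f"{lander_angle * ARCSEC_PER_RADIAN:.4f} arcsec")

for n in (1, 10, 100):
    d = min_aperture_m(0.5e-6, lander_angle, pixels_across=n)
    print(f"{n:>3} pixel(s) across the lander: aperture of about {d:,.0f} m")
```

By the bare Rayleigh criterion the single-pixel figure comes out at roughly 60 meters. A useful picture needs many resolution elements across the lander, and that is what pushes the mirror into the kilometers.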

So, don’t expect that anytime soon.

Good morning from the Big Island of Hawaii.

Nice post – and a question I get asked often (mostly by lunar lander hoax believers).

I work for a world class observatory (Subaru Observatory). I have one comment about your statement:

“It turns out that the best/biggest telescopes we use today on Earth are pretty close to being able to detect things the size and distance of the lunar landers. This isn’t due to poor design; the devices we’re using now are, in a word, perfect. They literally cannot be made appreciably better. ”

Subaru has, what is considered to be, the largest single piece mirror that can be practically made, at 8.2 meters (27 ft) and weighing a whopping 25 tons (try slewing that around quickly). Larger than that and the mirror properties themselves become problematic (as it is, we have servos in the mirror that correct for distortions that happen due to the weight at various angles).

So observatories such as Keck use segmented mirrors. One very large mirror made from a large number of smaller, hexagonal mirrors (they use software to remove the seams).

With such a design, there are no real limits to size (other than ability to move it).

This is why we have things coming along like TMT – a thirty meter telescope (98+ feet).

The light gathering power of a 98 ft mirror, versus our 27 ft mirror, is staggering.
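As a rough illustration of that comparison, light-gathering power scales with collecting area, so with the square of the mirror diameter. The sketch below assumes idealized, unobstructed circular mirrors (no secondary-mirror shadow, no segment gaps) and uses the two diameters quoted in this comment; `light_gathering_ratio` is a hypothetical helper name.

```python
def light_gathering_ratio(d_large_m, d_small_m):
    """Ratio of collecting areas of two circular mirrors.

    Area goes as diameter squared, so the factor of pi/4 cancels.
    """
    return (d_large_m / d_small_m) ** 2


tmt_diameter = 30.0    # meters, from the comment
subaru_diameter = 8.2  # meters, from the comment

ratio = light_gathering_ratio(tmt_diameter, subaru_diameter)
print(f"A 30 m mirror collects about {ratio:.1f} times the light of an 8.2 m one")
```

Under these simplifications the larger mirror gathers roughly 13 times as much light, while its diffraction-limited resolution improves only by the ratio of the diameters, a bit under 4.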

Now let’s look at the Instruments. Modern telescopes are not like those of long ago, with aged scientists peering through long tubes.

Our telescopes are more like a giant tube waiting for something to happen. That is where the scientific instruments come in. We can hook various sensitive instruments to various areas (prime focus, cassegrain focus, and two nasmyth points). And these instruments can each be different (IR, Visible, spectrum, etc etc).

When an astronomer makes a proposal for viewing, not only do they tell us what target they want to view, but they specify which instruments they want to use to do the viewing.

Perhaps they are looking for exoplanets, and want a spectrograph. Or distant galaxies, so a dust busting IR detector should be involved, etc.

The instruments are constantly improving. We are deploying a new instrument right now that is orders of magnitude more sensitive than the one it is replacing.

And that gets us to Adaptive Optics. All the world class observatories are using Adaptive Optics for not only artificial guide stars, but also for removing atmospheric distortion (goodbye twinkle twinkle little star – no more twinkle).

We are now on our 3rd generation AO system, with the fourth being tested. Each generation is leaps and bounds better than the previous.

AO lets us take images that rival Hubble, which sits outside the atmospheric well.

So the comment “They literally cannot be made appreciably better” is vastly incorrect. They can, and are constantly being made appreciably better.

It is true that at some point, observatories on Earth will be mostly replaced by space based observatories. But at our current point the cost and practicality of such a goal remains in the future.

The good news is, things like segmented mirrors can be applied directly to space based solutions – making our new generation of telescopes a good test bed for technology that can be deployed in space.

Happy New Year!

I’ve read that the same limitations exist in creating minimum feature sizes on computer processors. However, despite the fundamental limit being about 50 nanometers using their current wavelengths of light, they are able to decrease the size down significantly (current best in consumer technology is 14 nm). They use a process called computational photolithography. I don’t really understand the process, but is there anything here that could enhance the resolving power of telescopes?

https://en.wikipedia.org/wiki/Photolithography#Resolution_in_projection_systems

https://en.wikipedia.org/wiki/Computational_lithography

Locutus:

“They use a process called computational photolithography. I don’t really understand the process, but is there anything here that could enhance the resolving power of telescopes?”

Not unless you are looking down the wrong end of the telescope 🙂

I’m being serious in the glib answer too. Photolithography and similar techniques are extremely important in astronomy – but not perhaps where you were thinking.

These techniques are directly responsible for our constantly improving and enhanced detectors – the devices that do the actual observing.

Smaller and smaller pixels let us get more resolution and sensitivity in our light gathering area.

So yes, such techniques are very important in astronomy – though the research and end results are being carried out by companies not directly involved in astronomy (we merely reap the bounty).

David:

So practically, what does this mean? Do we get better quality images maybe in terms of color (or less fuzz or something) without actually increasing the resolving power?

Locutus:

It can mean a number of things, depending on the detector. It can mean more pixels – so greater resolution packed into the same area. It can mean more sensitivity – so the ability to detect weaker photons. It can mean increased wavelength range (e.g., ability to see IR better than before, etc).

As an example, I am currently part of a team redesigning one of our instruments. This version is so much more sensitive than previous versions that we are actually detecting alpha particles (radiation) from a coating on one of the lenses. Same lens, just a more sensitive detector than the previous version (since they are alpha particles, they are easy to block with a very thin sheet of glass).

Telescopes are all about light gathering. Larger mirrors mean more light. Better detectors mean more light.

David:

Thank you. That’s very interesting.

Even the little mathematics you displayed here [theta is approximately equal to 1.22(0.5*10^-6), approximately equal to 0.00000006 rads] puts me off balance, let alone the topics you discussed in the question segment, which include “how a scientist converts ideas into maths formulas”.

THANKS A LOT!

HA this is all rubbish. NASA hasn’t landed people on the moon but the Russians landed there in 1962 but decided to set up a nuke test site there. That’s what those Lunar Transient Phenomena are. Most of them are nuke tests on the moon. They have built their bases underground too so the orbiters can’t see them. By the way all these lander pics are fakes. All those moon rocks are fakes too.

Con:

As an ex-NASA scientist – I can assure you that we did land people on the moon. Multiple times.

But go ahead and believe the woo-woo if you want.

IT IS IMPOSSIBLE TO SEE SOMETHING THAT DOESN’T EXIST

You say in this article that we CANNOT see the lunar landing sites from Earth or even the Hubble Telescope. Then how do you explain this (goto 8:07 timestamp)? Are you lying to us or are you just lacking the knowledge to the contrary? These aren’t even the Apollo sites either. This alone should pull you out of your lying or disbelieving state but I assume it probably won’t. But please, PLEASE at least respond to this so we know that you at least aren’t cowardly in your denial.

No offense, just had enough bullshit,

Richard Purcell

P.S. You can plainly see the HUMAN tracks along with the vehicle tracks. You may publish this email & my address.

https://youtu.be/UPCtUUnkGE8

@Richard Purcell

To get high-resolution pictures of the Moon we use telescopes that are in orbit around the Moon. The USA, China, Russia, Japan, India, and the ESA have all put orbiters around the Moon to take pictures, map it, and study it. You can find a list of Moon missions here. The image you pointed out is of the Apollo 15 landing site, most likely taken by the Lunar Reconnaissance Orbiter. You can see all of the Apollo landing sites here.

How can we get a satellite image of the top of my car, but can’t get a satellite image of any of the lunar modules / landing stages? Can’t we turn the satellites on to the moon?

Would it not be a great publicity stunt for one of the space shuttles orbiting the moon to send back pictures of any of the buggies etc.

I don’t want to be a sceptic but I’d love to see some proof of what we’ve achieved up there?

With modern day photos of little helicopters on MARS! Surely we can see a 9-metre-wide landing platform on the moon?

Thanks

“How can we get a satellite image of the top of my car, but can’t get a satellite image of any of the lunar modules?”

Surveillance satellites orbit Earth at a height of around 100 miles. The moon, on the other hand, is almost 240,000 miles away. Thinking that getting 1/2,400th of the way closer to the moon would help you take better photos of small objects is like thinking that standing on your tiptoes would help you better spot a gnat at the top of a flagpole.

If we send something similar to an Earth-orbiting surveillance satellite to the moon, however, I don’t see why we couldn’t spot it, especially since we could probably achieve a lower orbit around the moon than we can around the Earth, because of the lack of atmosphere.
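To put rough numbers on that comparison, here is a small Python sketch of how the smallest resolvable feature grows with distance for a fixed telescope. It assumes a diffraction-limited 2.4 meter aperture (Hubble-sized, chosen purely for illustration; real surveillance satellite specifications are not given here), 0.5 micrometer light, and the two distances quoted in this reply. `ground_resolution_m` is a made-up helper name.

```python
METERS_PER_MILE = 1609.344


def ground_resolution_m(wavelength_m, aperture_m, distance_m):
    """Smallest resolvable feature at a given distance (Rayleigh criterion)."""
    return 1.22 * wavelength_m / aperture_m * distance_m


wavelength = 0.5e-6  # meters
aperture = 2.4       # meters, an assumed Hubble-sized mirror

res_earth = ground_resolution_m(wavelength, aperture, 100 * METERS_PER_MILE)
res_moon = ground_resolution_m(wavelength, aperture, 240_000 * METERS_PER_MILE)

print(f"from 100 miles up:      about {res_earth * 100:.0f} cm")
print(f"from 240,000 miles off: about {res_moon:.0f} m")
print(f"ratio: {res_moon / res_earth:.0f}x")
```

With these assumed numbers the same telescope that could pick out details a few centimeters across on a car roof from 100 miles up can only separate features about 100 meters apart on the Moon. Resolution degrades in direct proportion to distance, which is why the sharp pictures of the landing sites come from orbiters around the Moon.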

I’m just a ordinary individual and I can’t do all of the fancy mathematical formulas and equations..

But just ordinary common sense tells me that none of what we are or have been told about our planet or whatever this is we live in whether it’s a globe or whether it’s flat or like I think it’s all just a bunch of lies that we have been made to believe simply because we have all been made to live all our lives in the dark while our government makes decisions and does things that all of humanity is left in the dark just as humanity itself has been left in the dark ..

The truth is none of your college degrees or your fancy schools or your daddy’s money will ever tell you what none of us know for sure because the truth is we are all stupid ass idiots that really don’t know the first thing about what we are where we are or what is outside of this place or if there even is an outside of this place..

And for the smart ass that made the comment that you know for a fact that all that happened in so called space everyone knows that you idiots will say whatever they tell you to say ..

But me personally I don’t think we live in a place that we have all been led to believe we live in ..

Because people wake up if we were what they say and our place we live In is what they say it is ..

Then why would there be so many things hidden and so secretive and why would there need to be anything hidden from the people that pay for everything and those people are every tax paying citizen..

Think about it if they was telling the truth then why are we kept in the dark about every damn thing ..

Use your college degrees and your fancy math to answer that to the entire nation please enlighten me..

If you who purport to be educated scientists continue to ignore probably 150 pcs of evidence that we never set foot on the moon (one example & #1 reason we could not have gone, is a little phenomenon called the Van Allen Radiation Belts), then you are neither educated nor a true scientist. NASA released two videos, both now unavailable on YT, telling us that we cannot now, take a human safely beyond low earth orbit, leo, due to one factor, the radiation of the VAB. So don’t try & meander around that with a lot of mathematical doubletalk, I’ve seen & read it all & the bottom line is it is impossible with today’s technology, to add the needed equivalent of 4″ of lead or 4′ of water to insulate the craft to protect them